This document describes how to configure jumbo Maximum Transmission Unit (MTU) end-to-end across Cisco Data Center devices in a network that consists of a VMware ESXi host installed on the Cisco Unified Computing System (UCS), Cisco Nexus 1000V Series Switches (N1kV), Cisco Nexus 5000 Series Switches (N5k), and a NetApp controller.

Prerequisites

Requirements

Cisco recommends that you have knowledge of these topics:

Components Used

The information in this document is based on these software and hardware versions:

The information in this document was created from the devices in a specific lab environment. All of the devices used in this document started with a cleared (default) configuration. If your network is live, make sure that you understand the potential impact of any command or packet capture setup.

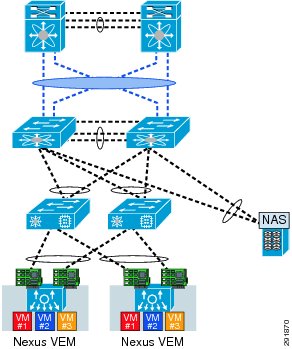

Configure

Network Diagram

The typical iSCSI Storage Area Network (SAN) deployment uses the Cisco UCS with a Fabric Interconnect in Ethernet End Host mode and the storage target connected through an upstream switch or switched network.

Through the use of the Appliance ports on the UCS, storage can also be connected directly to the Fabric Interconnects.

Whether the upstream network is 1 GbE or 10 GbE, the use of jumbo frames (an MTU size of 9000, for example) improves performance because it reduces the number of individual frames that must be sent for a given amount of data and reduces the need to separate iSCSI data blocks into multiple Ethernet frames. Jumbo frames also lower the host and storage CPU utilization.
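The reduction in frame count can be estimated with simple arithmetic. This is a minimal sketch, not a measurement: it assumes a 20-byte IPv4 header and a 20-byte TCP header with no options, ignores iSCSI PDU headers, and uses a hypothetical 1 MiB block of data. The function name `frames_needed` is illustrative only.

```python
import math

# Assumed per-packet overhead inside the MTU: IPv4 (20) + TCP (20), no options.
L3_L4_HEADERS = 40


def frames_needed(data_bytes: int, mtu: int, headers: int = L3_L4_HEADERS) -> int:
    """Return how many Ethernet frames are needed to carry data_bytes."""
    payload_per_frame = mtu - headers  # data bytes that fit in one frame
    return math.ceil(data_bytes / payload_per_frame)


block = 1024 * 1024  # hypothetical 1 MiB of iSCSI data

standard = frames_needed(block, 1500)
jumbo = frames_needed(block, 9000)

print(f"MTU 1500: {standard} frames")  # 719 frames
print(f"MTU 9000: {jumbo} frames")     # 118 frames
```

Under these assumptions, the same block needs roughly one sixth as many frames at an MTU of 9000, which is where the lower per-frame processing cost on the host and storage comes from.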

If jumbo frames are used, you must ensure that the UCS and storage target, as well as all of the network equipment between them, are capable of and configured for the larger frame size. This means that the jumbo MTU must be configured end-to-end (initiator to target) in order for it to be effective across the domain.
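The end-to-end requirement follows from the fact that the usable MTU of a path is the smallest MTU of any hop along it. The sketch below illustrates this with hypothetical values; the hop names mirror the devices in this document, but the MTU numbers (including the N5k left at 1500) are invented for the example.

```python
def path_bottleneck(hops: dict) -> tuple:
    """Return (hop name, MTU) for the hop with the smallest MTU on the path."""
    name = min(hops, key=hops.get)
    return name, hops[name]


# Hypothetical path from initiator to target; values are illustrative only.
path = {
    "ESXi VMkernel port": 9000,
    "N1kV uplink port-profile": 9000,
    "UCS vNIC": 9000,
    "N5k": 1500,  # assumed to have been left at the default
    "NetApp controller port": 9000,
}

hop, mtu = path_bottleneck(path)
print(f"Effective path MTU is {mtu}, limited by: {hop}")
```

A single hop left at 1500 limits the whole path, so every device between the initiator and the target has to be checked.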

Here is an overview of the procedure that is used in order to configure the jumbo MTU end-to-end:

Note: Reference the Cisco Unified Computing System (UCS) Storage Connectivity Options and Best Practices with NetApp Storage Cisco article for additional information.

Cisco UCS Configuration

The MTU is set on a per-Class of Service (CoS) basis within the UCS. If you do not have a QoS policy defined for the vNIC that heads toward the vSwitch, then the traffic moves to the Best-Effort Class.

Complete these steps in order to enable jumbo frames:

Verify

Verify that the vNIC has the MTU configured as previously described.

Verify that the uplink ports have jumbo MTU enabled.

N5k Configuration

With the N5k, jumbo MTU is enabled at the system level.

Open a command prompt and enter these commands in order to configure the system for jumbo MTU:

Verify

Enter the show queuing interface Ethernet x/y command in order to verify that jumbo MTU is enabled:

Note: The show interface Ethernet x/y command shows an MTU of 1500, but that value is incorrect. Use the output of the show queuing interface Ethernet x/y command instead.

VMware ESXi Configuration

You can configure the MTU value of a vSwitch so that all of the port-groups and ports use jumbo frames.

Complete these steps in order to enable jumbo frames on a host vSwitch:

Complete these steps in order to enable jumbo frames only on a VMkernel port from the vCenter server:

Verify

Enter the vmkping -d -s 8972 <storage appliance ip address> command in order to test the network connectivity and verify that the VMkernel port can ping with jumbo MTU.

Tip: Reference the Testing VMkernel network connectivity with the vmkping command VMware article for more information about this command.

Note: The largest ICMP payload size is 8972 bytes, which results in a 9000-byte packet once the 20-byte IP header and the 8-byte ICMP header are added.
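The payload size for other MTU values can be derived the same way. This is a minimal sketch that assumes an IPv4 header without options (20 bytes) and a standard ICMP echo header (8 bytes); the function name is illustrative.

```python
IPV4_HEADER_BYTES = 20  # assumes no IP options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP payload that fits in one unfragmented packet of size mtu."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


print(max_ping_payload(9000))  # 8972
print(max_ping_payload(1500))  # 1472
```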

At the ESXi host level, verify that the MTU settings are configured properly:

Cisco IOS Configuration

With Cisco IOS® switches, there is no concept of global MTU at the switch level. Instead, MTU is configured at the interface/ether-channel level.

Enter these commands in order to configure jumbo MTU:

Verify

Enter the show interfaces gigabitEthernet 1/1 command in order to verify that the configuration is correct:

N1kV Configuration

With the N1kV, the jumbo MTU can only be configured on the Ethernet port-profiles for uplink; MTU cannot be configured at the vEthernet interface.

Verify

Enter the show run port-profile UPLINK command in order to verify that the configuration is correct:

NetApp FAS 3240 Configuration

On the storage controller, the network ports that are connected to the Fabric Interconnect or to the Layer 2 (L2) switch must have jumbo MTU configured. Here is an example configuration:

Verify

Use this section in order to verify that the configuration is correct.

The verification procedures for the configuration examples described in this document are provided in the respective sections.

Troubleshoot

There is currently no specific troubleshooting information available for this configuration.

Since iSCSI networks have been growing in popularity over the past couple of years, more people have been trying to use jumbo frames to eke out a little more speed. This article is going to tell you how to test your jumbo frames after you get them configured.

The theory behind why raising the MTU will give better performance is fairly simple. (Please excuse my crude description as I tried to keep it very simple.) Data is diced up into sections to make transmission easier. Normal networks split data into 1,500-byte segments. A frame is then put around that data to tell it where to go. If you start splitting that data into 9,000-byte segments, there will be fewer frames created. Theoretically this will give you less overhead and slightly faster speeds.

There are three places where you could run into errors if you start changing MTU sizes: the switch, the host computer, or the client computer. Most of the time the host will be a SAN or iSCSI tape drive. The client will usually be a server accessing the SAN or tape drive. So to test connectivity we will try to ping everything, just like troubleshooting standard network connectivity issues. The difference is that we will be setting the -f and -l switches of the Windows ping command.

The -f switch tells the computer that you do not want your ping packet split up (fragmented) at all. The -l switch sets the size of the ping payload in bytes. Let’s try these out right now. Try to ping your router using those switches and a 1600-byte packet.

It will look similar to this: ping -f -l 1600 192.168.1.1

It should tell you that the packet needs to be fragmented. If you lower the size to 1000 then it will ping successfully. Now let’s get onto your iSCSI network and try some larger packet sizes.

The first step will be to ping your switch with a large packet size and see if it works.

Here is a good example test: ping -f -l 5000 172.16.0.10

If it does not work then try a small packet. If that goes through then you have a discrepancy in MTU size.

If both pings make it through the switch just fine, you should try to ping the host device using the same commands. This will tell you where your packets are getting dropped, and hopefully save you a lot of time troubleshooting.
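The hop-by-hop test can also be scripted. The following is a rough sketch under several assumptions: it builds the Windows form of the command (ping -n 1 -f -l, where the documented size switch is the lowercase -l) or the Linux iputils form (ping -c 1 -M do -s), and it treats a non-zero exit code as a failed ping, which may not hold on every platform, so check the ping output if a result looks wrong. The function names and the second address in the usage note are hypothetical.

```python
import platform
import subprocess


def df_ping_command(host, payload, system=None):
    """Build a single don't-fragment ping command for the given OS."""
    system = system or platform.system()
    if system == "Windows":
        # -n 1: one echo request, -f: set Don't Fragment, -l: payload size
        return ["ping", "-n", "1", "-f", "-l", str(payload), host]
    # Linux iputils: -c 1: one request, -M do: prohibit fragmentation, -s: size
    return ["ping", "-c", "1", "-M", "do", "-s", str(payload), host]


def first_failing_hop(hops, payload, run=subprocess.run):
    """Ping each hop in order and return the first one that fails, or None."""
    for host in hops:
        result = run(df_ping_command(host, payload), capture_output=True)
        if result.returncode != 0:  # assumption: non-zero means the ping failed
            return host
    return None


# Show the command only; nothing is sent on the network here.
print(" ".join(df_ping_command("172.16.0.10", 5000, system="Windows")))
```

For example, calling first_failing_hop(["172.16.0.10", "172.16.0.20"], 5000) pings the switch first and then a hypothetical host address, and returns the first device that does not answer at that size.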
